A blog about making all assessments actionable and useful for teaching and learning.

-

Teaching to the Test, Racing to the Test, or Reviewing for the Test: What are the Differences, and Why Does it Matter?

It is that time of year again. End-of-course exams are either being administered to your students or are about to be. These tests are designed to sample content from your state’s standards so policy makers can make inferences about how much of the standards each student learned this year. Students are measured at the end…

-

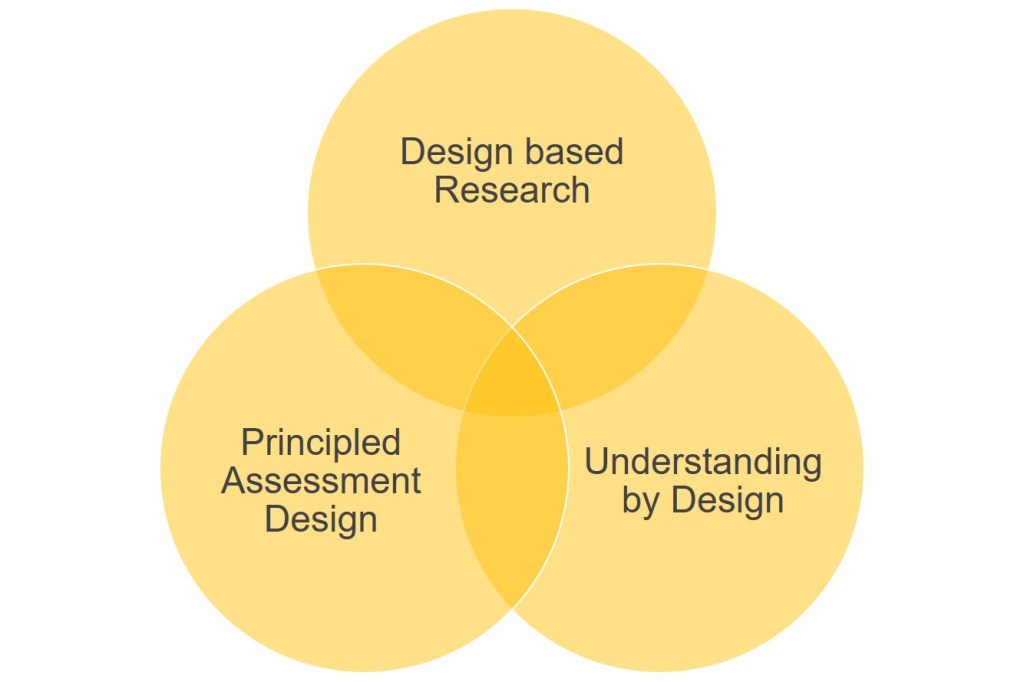

Synergizing Assessment with Learning Science to Support Accelerated Learning Recovery: Principled Assessment Design

A key aspect of formative assessment is that teachers collect and interpret samples of student work or analyze items to diagnose where students are in their learning. Busy teachers are faced with two choices. They can count the responses answered correctly by topic and move on; or stop, grab a cup of steaming hot coffee,…

-

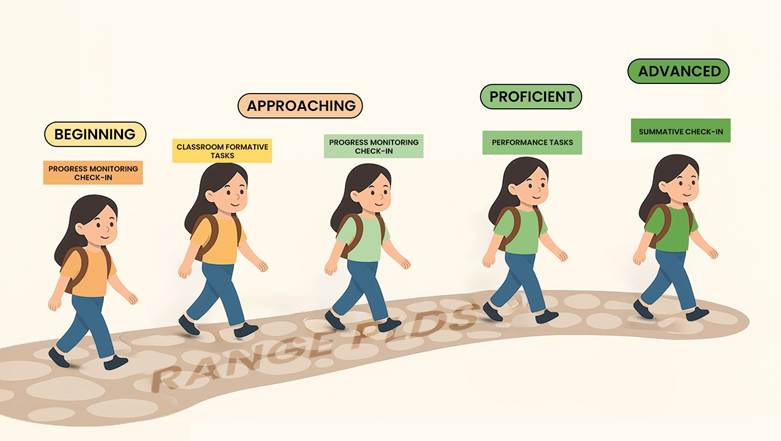

Progress Monitoring That Matters: Using Validated Score Interpretations to Evolve Balanced Assessment Systems

There is growing consensus that a single end-of-year assessment provides student learning information too late to be instructionally supportive. For this reason, roughly 25% of states are moving to or piloting some type of progress monitoring assessment system to help determine if students are growing during the year. One of the reasons I advocate for…

-

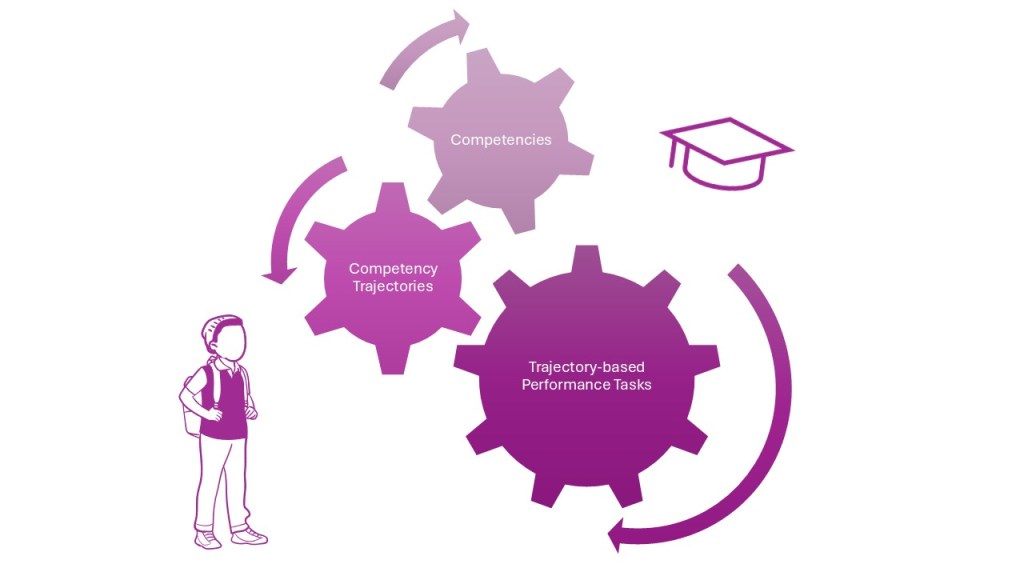

Creating a Strategic Plan for Competency-Based Education

Our education system may not be cultivating the workforce of tomorrow. A recent article in Forbes shows that many managers believe Gen-Z workers lack essential skills like problem-solving, collaboration, creativity, and the ability to engage in productive conflict resolution. As a result, these young graduates are less likely to be hired. Brandt et al. (2025)…

-

Is Your Student Stuck in the Same Stage of Learning?

Four Key Assessment Use Strategies to Increase Student Achievement, by M. Christina Schneider. Moss and Brookhart (2019), in their book Advancing Formative Assessment in Every Classroom: A Guide for Instructional Leaders, defined formative assessment as a teacher and student collaboration using systematic processes to (a) collect, (b) analyze, and (c) take action to improve learning based…

-

A Reflection on “Breaking with Tradition: The Shift to Competency-based Learning in PLCs at Work”

Late last month the National Assessment of Educational Progress (NAEP) released results for the long-term trend assessment in mathematics. The results were not happy news. We continue to see evidence of student performance being lower now than in years and decades past, due to COVID. This decline is in spite of an “almost” normal year…

-

A Reflection Based on “Leading a Competency-based Secondary School”

Two students and a teacher talking in the hallway.

-

Market Research as Context and Evidence to Support Innovation

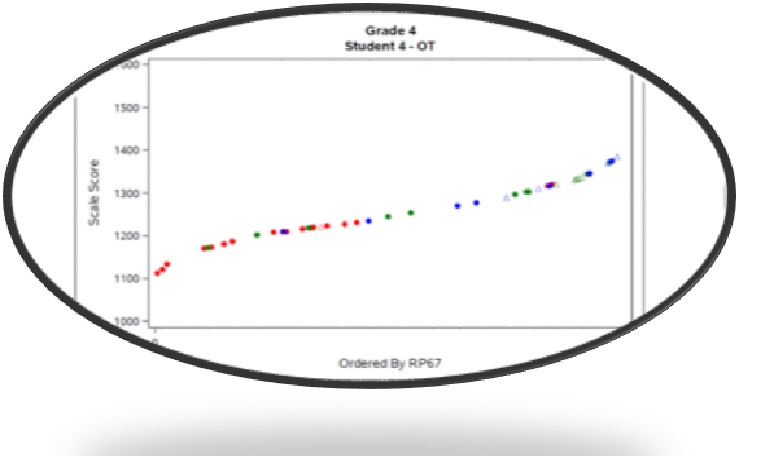

Items along a test scale should show as red, green, and blue to denote a learning progression, but in the graphic readers see messy green and blue intermixing, with no clear differentiation between the middle and advanced stages.

-

K-12 Accommodation Policies in a Digital Age: Is it Time for A Change?

In the space of two years, historical educational accommodation policies for K-12 students set at the state and federal level have become increasingly difficult to implement in practice. Why in many states might guidance that accommodations used when taking a statewide assessment be the same ones the student uses during typical classroom instruction and assessment…

-

From Multiple Choice to the Beyond!

Case Studies in English Language Arts: Part 2. In the first blog of this series, I argued that when teachers use multiple-choice items as the predominant way of measuring student learning, it is difficult (to near impossible) to uncover what students are thinking. Many students enter and exit their grade in roughly the same relative…

Check out my book!