Based on a talk delivered on November 3, 2022, at Beyond Multiple Choice.

Statewide assessments are often among the most valid tools we have for measuring student learning. However, score reports rarely identify the instructional actions that research evidence suggests will help students grow, and they are seldom returned to teachers or parents quickly, so teachers find little utility or transparency in assessment results. There are increasing calls from the field for assessments to better serve teachers and students by being more instructionally useful.

Thus, there is a tension among several forces: what teachers would like assessments to do; what buyers (e.g., states or districts) describe as needs in their RFPs; the existing infrastructure, capacity, and test development processes used across different organizations; and the industry's decision-making processes, which sometimes privilege opinions and organizational constraints as the main drivers of decisions about innovation.

I advocate for principled assessment design centered in Range achievement level descriptors (RALDs). Range ALDs represent likely pathways or benchmarks along which student learning unfolds when they are built to describe three things: the content that students know and can do, the cognitive complexity with which students are thinking about that content, and the context, that is, the conditions under which a student can show you what they know. When content, cognitive complexity, and context are embedded into Range ALDs based on state standards and merged with evidence from the learning science literature, they become a theory of learning consistent with the definitions of learning progressions found in that literature.

Range ALDs built to function as learning progressions can be a bridge that connects an assessment system to teaching and learning when there’s evidence to show that the test score interpretations that we are providing are reasonably true. RALDs can help teachers understand where the student is currently in their learning with the next stage of the progression representing the likely opportunity to learn the student needs next. They show the characteristics of the types of extra instructional experiences and practice opportunities the student needs next to grow. In this example, we are seeing a Range ALD centered on the standards but also using evidence from the learning science community that shows where the evidence is located in a text relates to how difficult a question is for a student. This holds implications for teachers understanding how to direct questions strategically to students in different stages of reading comprehension.

To realize this vision and innovation, we need to persistently embed item-to-RALD alignment throughout the test development cycle.

We are re-sequencing historic test development processes to streamline the work of assessment development for efficiency, efficacy, coherence, and instructional utility. The first step of streamlining is to take the development of Range ALDs, which historically were developed at Step 7, and move them to Step 1 because these are the test score interpretations reported in Step 8. We are building items to Range ALDs, and we have evidence that we are doing this when we see Range ALDs as the task demands in item writing specifications and items tagged to the RALDs in item management systems in Step 2. This centers blueprints and item development plans on these statements in Step 3. We field test items in Step 4 and then we can immediately begin validating the Range ALDs in Step 5.

Range ALDs are often a set of hypotheses. We may use evidence from learning science, along with teacher and item writer expertise, to inform Range ALD progressions, but research may not be available for every single standard, so the creators are in effect stating hypotheses. At Step 5 we are hypothesis testing. We can do this with an embedded standard setting algorithm. What do we do if we discover that teachers think content becomes more difficult when data from students indicate the content is easier than predicted? We engage in the iteration process by editing the RALDs based on what we learn from student data and reworking the item writing specifications based on this research. In Step 6 we deploy the operational assessments. At Step 7 we can validate the alignment and, if desired, engage in standard setting using that alignment process with embedded item-descriptor matching, to ensure instructionally useful score reports (Step 8).
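The hypothesis check at Step 5 can be sketched in a few lines of Python. This is a minimal illustration, not the embedded standard setting algorithm itself: the cut scores, item IDs, and difficulty values are all hypothetical, and the function names `empirical_level` and `flag_items` are invented for this example. It assumes each item carries a hypothesized RALD level and an empirical difficulty on the test scale, and it flags the items that land in a different level than predicted.

```python
from bisect import bisect_right


def empirical_level(difficulty, cuts):
    """Map a scale difficulty to a 1-based level, given ascending cut scores."""
    return bisect_right(cuts, difficulty) + 1


def flag_items(items, cuts):
    """Return the items whose empirical level differs from the hypothesized one.

    `items` holds (item_id, hypothesized_level, difficulty) tuples.
    """
    flags = []
    for item_id, hypothesized, difficulty in items:
        observed = empirical_level(difficulty, cuts)
        if observed != hypothesized:
            direction = "easier" if observed < hypothesized else "harder"
            flags.append((item_id, hypothesized, observed, direction))
    return flags


# Hypothetical cut scores separating three levels on the test scale.
cuts = [-0.5, 0.5]

# Hypothetical field-test results: (item_id, hypothesized level, difficulty).
items = [
    ("A", 1, -1.2),
    ("B", 2, -0.9),
    ("C", 2, 0.1),
    ("D", 3, 0.3),
    ("E", 3, 1.4),
    ("F", 1, 0.0),
]

flags = flag_items(items, cuts)
for item_id, hypothesized, observed, direction in flags:
    print(f"Item {item_id}: hypothesized level {hypothesized}, "
          f"observed level {observed} ({direction} than predicted)")
```

A list like this is one possible starting point for the iteration described above: flagged items point to the RALD statements and item writing specifications worth revisiting.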

What are the common initial blockers to this process? First, this is a significant change of process for content experts. It is historically more common to have items written to standards in a routinized manner that content experts are efficient with. When we ask them to change their process, this can be especially difficult when they are placed under stressful timelines with limited resources because the iteration process that we’re talking about may create additional work steps. This also affects the cost for a project for a single division even if the framework results in net cost savings downstream. A company has to work together across divisions to think about costs; otherwise, you have one division that has an idea to drive innovation, another division that has labor constraints, and another division that has different opinions of utility for teachers with no process to help resolve these tensions.

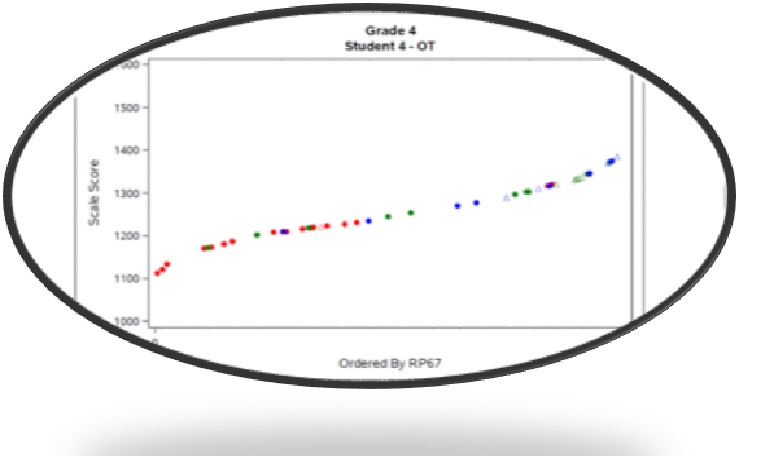

Instructionally useful assessments have score interpretations that are reasonably true. In this graphic you are looking at items along a test scale and whether a student answered each item correctly (dot) or incorrectly (triangle). If the items aligned to progressions work correctly, we should see a pattern of solid red, then solid green, then solid blue dots or triangles. Here we see that the earlier stage of the progression, in red, functions much as expected, but in the messy middle and the advanced stage the items aligned to the progressions intermix. This means we are not giving teachers accurate information about what students in the middle and at the high end of the scale know and can do.
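One rough way to quantify this kind of intermixing is to ask, for two adjacent stages, how often an item from the lower stage is actually easier than an item from the higher stage. The sketch below is an illustration under stated assumptions, not the analysis behind the graphic: the stage tags and difficulty values are hypothetical, and `concordance` is a name invented for this example.

```python
def concordance(items, lower, upper):
    """Share of (lower-stage, upper-stage) item pairs in the expected order.

    `items` holds (stage, difficulty) tuples. A value of 1.0 means every
    lower-stage item is easier than every upper-stage item; values near 0.5
    mean the two stages intermix along the scale.
    """
    lower_d = [d for s, d in items if s == lower]
    upper_d = [d for s, d in items if s == upper]
    pairs = [(lo, hi) for lo in lower_d for hi in upper_d]
    if not pairs:
        return float("nan")
    return sum(lo < hi for lo, hi in pairs) / len(pairs)


# Hypothetical items: (progression stage, difficulty on the test scale).
items = [
    (1, -1.5), (1, -1.0),
    (2, -0.2), (2, 0.6),
    (3, 0.1), (3, 1.2),
]

print(concordance(items, 1, 2))  # stages 1 and 2 separate cleanly
print(concordance(items, 2, 3))  # stages 2 and 3 partly intermix
```

With these made-up values the first pair of stages is fully ordered while the second is not, which mirrors the pattern described for the early versus middle and advanced stages.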

If you do not have evidence that fixing this issue is useful and has value to stakeholders, you likely do not have convincing evidence for other parts of your organization who have different pain points. Therefore, it is important for the organization to determine if business as usual is delivering the same potential value to stakeholders as the proposed innovation.

- What is the evidence that the innovation is useful and has value to stakeholders?

- What is the evidence that business as usual is delivering the same potential value as the innovation?

If you have these differing points of view, is it worth devoting 2% to 3% of a project's projected budget to answering such questions empirically before you start building your road map? Or is it better to spend money creating a solution that may not solve fundamental issues in the industry? Market research can inform decisions about where to place resources.

Gathering Evidence from the Field

I am going to give an overview of a study I did with a vice president of product management and a research associate, in which we looked at this problem together, in particular for creating an NGSS-based science interim assessment. Together, we surveyed a nationally representative sample of buyers and users. In our study, buyers were district stakeholders who contribute to the decision-making process about which assessments a district should buy. Users were the teachers who are tasked with taking the assessment data and changing instruction.

What we found is that both district stakeholders and teachers believe that assessment design should be based on research evidence about how students learn, and, for reporting, feedback on student achievement should be based on the actual item content that individual students took, much like teachers think about a classroom assessment. This is particularly important for understanding where a child is in a learning progression. We learned that 73% of buyers found a system that provides scale scores and validated learning progressions to be the most important quality based on the questions we posed, and that they believed that implementing the learning progressions as part of reporting could support not only next steps for instruction but also help teachers better understand how to differentiate content for students.

Eighty-nine percent of buyers indicated that it was important that items be designed using research evidence about how students learn. For example, having students write, as in constructed-response items, helps students transfer their learning into long-term memory. It is our job to help put such research into practice, so that assessments are not only a measure of learning but also a support for learning.

Now let’s look at whether business as usual is worth maintaining. Only 20% of teachers support maintaining business as usual. (Recall that this survey related to science and NGSS implementation.)

Conversely, we found that 73% of buyers desired an assessment that delivered both scale scores and validated learning progressions. Thus, we have support for implementing our innovative framework throughout the test development cycle! Buyers just need to ask for this framework by name if they want the measurement industry to move faster.

What is next?

Personally (and perhaps this is true for other principled assessment designers), I cannot just declare victory and begin to implement this process without addressing content expert pain points. We need additional innovations layered into the process. How do we make it efficient for content experts to iterate in ways that incorporate feedback loops, from a systems perspective, in places where items are easier or more difficult than expected? What innovations need to occur in reporting systems so that teachers can visualize the dynamic entry point into the learning progressions that a student likely needs next to grow? Mastery of standards means students have integrated content from other standards at particular levels of complexity in particular contexts. Within a unit of instruction, students need tasks adapted to the next higher stage of learning to grow. We need to support teachers by being more transparent about what growth to proficiency looks like so they can provide students sufficient and “just right” opportunities to learn. Market research provides evidence from the field that assessment buyers and users want us to help them work more efficiently to support student learning.

*Schneider, M. C., & Lewis, D. (2022, June). Persistently Embedded Item-RALD Alignment: An Instructionally Supportive Model for Test Development. Presentation at the 2022 annual meeting of the National Conference on Student Assessment.
